3 Reasons SEL Assessments Aren’t “Just Another Test”

By Jonathan E. Martin

“Not another test!”

This is a common reaction when educators and parents encounter the suggestion that we should begin measuring and assessing social and emotional competencies (also known as noncognitive skills and character strengths) in ways similar to how we test academic achievement and cognitive capacities.

This reaction is understandable. The U.S. saw a massive spike in the academic testing of K-12 students in the wake of No Child Left Behind (NCLB), including high-stakes summative tests for accountability and (even more so) a deluge of interim and formative tests meant to ensure students are prepared and on track for the high-stakes testing. As Anya Kamenetz writes in her book, The Test: Why Our Schools Are Obsessed with Standardized Testing, “Standardized assessments test our children, our teachers, our schools—and increasingly, our patience.”

Some surveys report that half or more of parents think we already have too much testing, though these numbers can change depending on whom you’re asking and how you phrase the question. (From a comparative international perspective, it should be noted there’s some reason to doubt the widespread perception that U.S. students are terribly over-tested; one recent study concluded “most nations give their students more standardized tests than the United States does.”)

Now the idea of adding SEL assessment has come (somewhat suddenly) to national attention, largely because of the Every Student Succeeds Act (ESSA), the new federal law that calls for including non-academic measures in school accountability systems.

But won’t this just worsen the problem of over-testing?

There are three general answers to this objection to adding “yet another test.”

First: If we care about whole-child education, we can’t let a surfeit of testing in one arena of schooling lead us to conclude there should be no testing in any other, equally important arena. Nor is this merely a matter of fairness, though it would be odd to argue that, because we test approximately seven times a year in math and reading, we should test zero times in social skills and emotional intelligence.

It’s really a question of effective, data-driven or data-informed leadership. As we increasingly recognize the value of informing the continuous improvement of our math and reading programs by careful study of what’s really working, we must realize that we can and should do the same with careful study of our social and emotional programs, using reliable and valid data about the effectiveness of our initiatives and interventions.

Second: Measuring SEL is actually a helpful way to discover whether academic (over)testing is having a detrimental effect. If we find that students in high-testing regimes have poor social and emotional skills, then we will have useful evidence that testing may be harming our students. The authors of Learning to Improve recommend that schools implement so-called “balancing measures,” secondary assessments used as a “check” to ensure that a particular initiative being measured by the “primary measure” isn’t having unintended negative consequences.

As an example, imagine a school that sought to improve its math scores by dramatically increasing time for worksheet drills and times-table memorization. That school might (though not certainly) see a small uptick of one or two points in math scores, leading it to believe its improvement effort was successful.

But what if, at the same time, the same school observed in its “balancing measure” a sharp decline in collaboration, curiosity and communication skills, each falling ten to fifteen points? That changes one’s view considerably; we can no longer see the initiative as worthwhile. But we can reach conclusions this consequential for our students only if we employ such “balancing” assessments.

Third: Perhaps most importantly, students view these tests differently: kids report that they genuinely appreciate and value the addition of SEL assessment to the mix.

Students Support SEL Testing

In the past few months, researchers from ProExam have conducted multiple focus groups with students and teachers, sharing sample SEL standardized testing items and asking them for their reactions. The responses were almost universally positive. One California teacher reports that “the students were excited to take a test that asked questions relating to their favorite topic – themselves. They were fascinated to hear that these so-called traits were not fixed but could be changed with deliberate practice. In discussing the importance of conscientiousness and emotional stability, it was as if we had cracked a code that some intuitively understood and others were still trying to figure out.”

Students offered statements of support such as “I think that if I went through a bunch of these questions, then I’d get a better sense of myself,” and “It’d be very interesting to see how things change and you grow over time from year to year.”

Student support for this alternative kind of testing was also observed in a recent pilot study of ProExam’s new SEL assessment tool, Tessera™. Testing more than a thousand high school and a thousand middle school students across 20 schools, researchers found that both the self-report tests and the situational judgment tests (SJTs) reached acceptable levels of reliability and predicted grades and other educational outcomes in a meaningful and robust fashion. (If you are interested in the fine details of these studies, the researchers are fast-tracking their findings on ResearchGate, a social network for the science community.)

At the end of some of the pilot studies, about 300 students completed a quick survey about the testing experience, and their answers proved quite revealing.

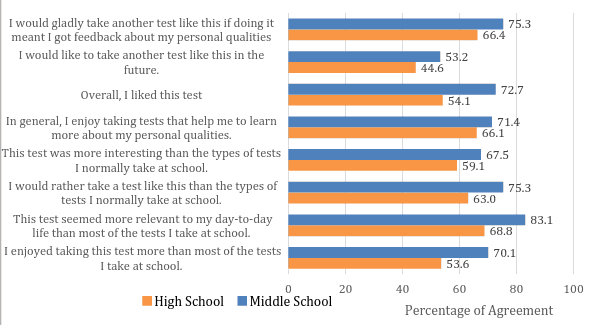

By and large, students responded quite positively to questions about the experience (see the full table above), especially when comparing this kind of testing experience to the “types of tests I normally take at school.” For instance, asked whether they’d prefer this kind of assessment to a typical test, 75% of middle school students and 63% of high school students expressed agreement.

This is not merely a matter of whim or likability, though; the more significant numbers appear when students are asked whether this kind of test is more relevant to their lives (83% agreement in middle school, 68% in high school) and whether they’d gladly take tests like this if they received feedback they could use (75% agreement in middle school, 66% in high school). For students, this is not just another test; it is an assessment of things that matter to them, which they seek to improve and for which they appreciate useful feedback.

Asking whether we ought to add any more assessments to what is already a crowded testing environment is an entirely valid and understandable question. And indeed, it makes all kinds of sense for policymakers and assessment directors to consider carefully whether to trim the current crush of academic testing and cognitive assessment.

But it is nonsensical to say that, because we test in one domain too frequently, we shouldn’t assess any other domains at all. Students see the difference, and they value the opportunity to learn more about themselves and to strengthen these key 21st-century competencies. SEL assessment deserves a place at the testing table.

This post is part of a blog series on measuring SEL and noncognitive skills produced in partnership with ProExam (@proexam). Join the conversation on Twitter using #SEL. For more in this series, see:

- Infographic | Why It Is Imperative to Assess Social Emotional Learning

- Getting Smart Podcast | Schools Can (and Should) Measure Noncognitive Skills

- 10 Ways Educators Can Use SEL Measurement and Assessment for Student Success

- Can Grit be Grown?

- Social and Emotional Learning and Assessment: The Demand is Clear

- Schools Really Can (and Should) Measure Noncognitive Skills

Jonathan E. Martin is Strategic Implementation Advisor to ProExam Tessera™ and an educational writer and consultant. Follow him on Twitter: @JonathanEMartin.

This post includes mentions of a Getting Smart partner. For a full list of partners, affiliate organizations and all other disclosures please see our Partner page.